We are excited to announce that two research papers from Typhoon team have been accepted to EACL 2026.

This marks a major milestone that reflects our joint commitment to advancing open, inclusive, and impactful AI research for Thailand and the global NLP community.

Our accepted papers are:

Main Conference Papers

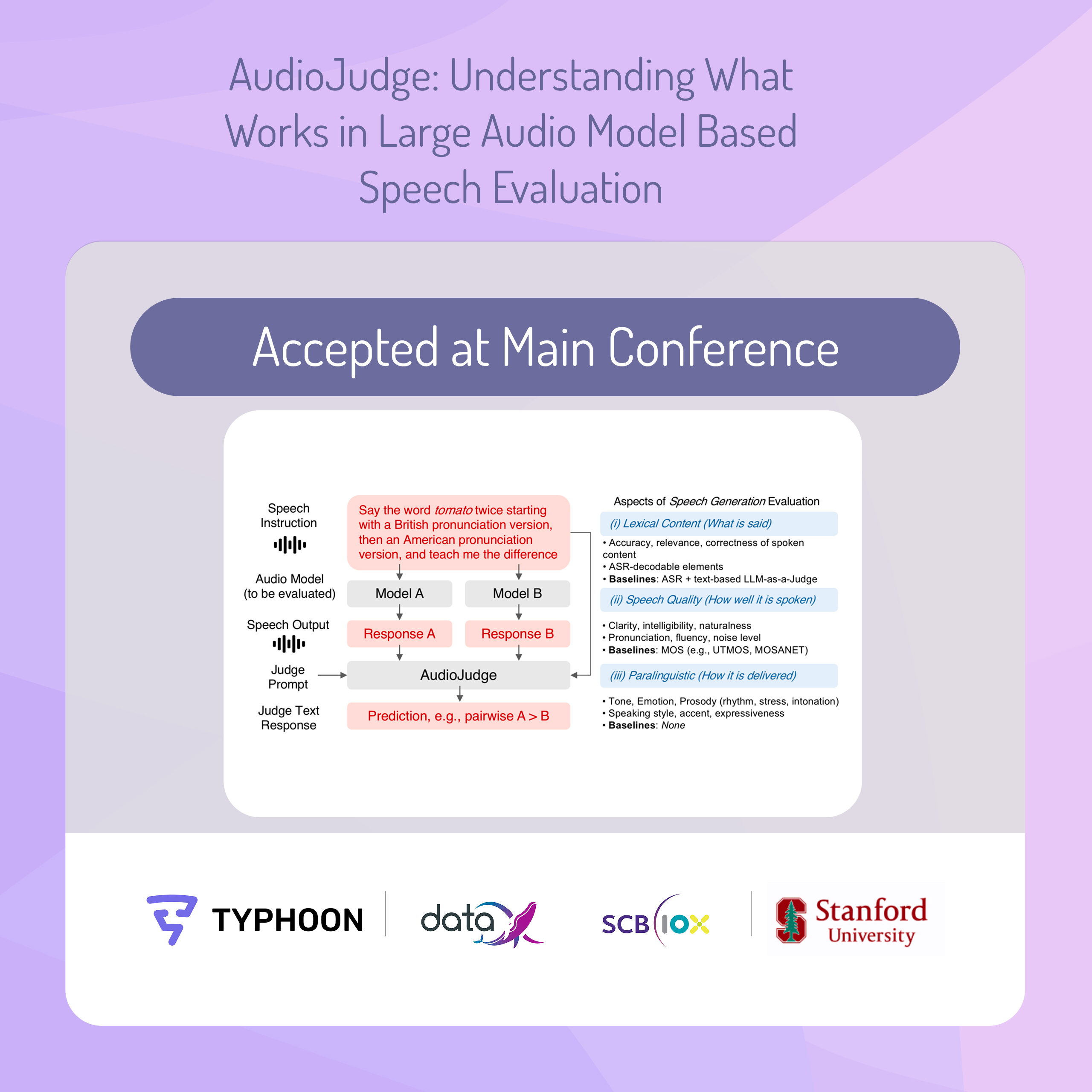

AudioJudge: Understanding What Works in Large Audio Model Based Speech Evaluation

Speech evaluation remains difficult for two reasons: it often depends on specialized systems for each individual audio characteristic, and many automatic metrics still correlate poorly with human preferences. AudioJudge explores whether Large Audio Models (LAMs) can serve as a more unified framework for evaluating speech across multiple dimensions.

Key idea:

- AudioJudge studies how large audio models can act as judges for both audio characteristic detection and system-level human preference simulation, instead of relying on separate evaluation tools for each task.

Approach:

- Evaluate LAM-based judging across several speech evaluation settings, including pronunciation, speaking rate, speaker identification, and speech quality.

- Study prompt design choices, showing that audio concatenation combined with in-context learning substantially improves performance.

- Introduce a multi-aspect ensemble AudioJudge that decomposes evaluation into specialized judges for lexical content, speech quality, and paralinguistic features.

Findings:

- AudioJudge provides a strong general-purpose framework for multi-aspect speech evaluation.

- The multi-aspect ensemble reaches up to 0.91 Spearman correlation with human preferences on system ranking benchmarks.

- The study also identifies key limitations, including verbosity and positional bias, even though robustness under acoustic noise remains strong.

This work helps clarify not only whether large audio models can judge speech well, but also what design choices make them work best in practice.

Extending Audio Context for Long-Form Understanding in Large Audio-Language Models

Large Audio-Language Models (LALMs) are often constrained by short audio context windows, even when their text backbones support long contexts. This severely limits their capabilities in long-form audio understanding. While context-extension methods such as YaRN exist for unimodal LLMs, their application to LALMs has remained largely unexplored.

Key idea:

- This paper introduces methods to extend audio context in LALMs without sacrificing the original model’s text capabilities. It proposes both a training-free method and a training-time strategy for handling much longer audio inputs.

Approach:

- Introduce Partial YaRN, a modality-decoupled extension method built on RoPE-based context scaling that modifies only audio token positions, while leaving text positions unchanged.

- Propose Virtual Longform Audio Training (VLAT), which turns this idea into a training-time positional augmentation strategy.

- Simulate diverse audio lengths during training so models can generalize to inputs that are far longer than those seen during training.

Findings:

- Partial YaRN consistently outperforms the original models across a wide range of settings.

- VLAT provides substantial gains on long audio inputs of unseen lengths.

- The results show that long-context techniques from text-only LLMs can be adapted effectively to the audio-language setting when designed carefully.

This work addresses a key bottleneck for real-world audio-language systems: enabling models to move beyond short clips with a training-free approach toward long-form understanding.

Looking Ahead

We are proud to see these papers accepted at EACL 2026. Together, they represent two complementary directions in audio-language research: better evaluation and longer-context understanding.

As audio-language models become more useful in real applications, both of these challenges matter. We need systems that can measure quality in ways that align with human judgment, and we also need models that can process long-form audio without breaking down. These papers are steps toward both goals.

We’re excited to continue building open and practical AI research for the community, and we look forward to sharing more soon.